Comprendo esta pregunta. Es listo a ayudar.

what does casual relationship mean urban dictionary

Sobre nosotros

Category: Fechas

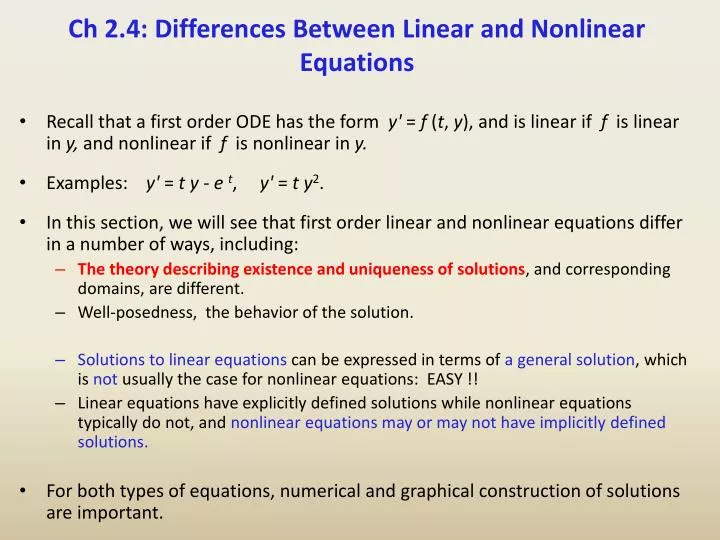

What is the difference between linear and non-linear algorithms



UV-vis in situ spectrometry data mining through linear and non-linear analysis methods

Received: April 10th. Received in revised form: January 27th. Accepted: January 31st.

Abstract: UV-visible spectrometers are instruments that register the absorbance of emitted light by particles suspended in water at several wavelengths and deliver continuous measurements that can be interpreted as concentrations of parameters commonly used to evaluate the physico-chemical status of water bodies.

Classical parameters that indicate the presence of pollutants are total suspended solids (TSS) and chemical oxygen demand (COD). Flexible and efficient methods to relate the instrument's multivariate registers and classical measurements are needed in order to extract useful information for management and monitoring. Analysis methods such as Partial Least Squares (PLS) are used to calibrate an instrument for a water matrix, taking cross-sensitivity into account.

Several authors have shown that it is necessary to undertake specific instrument calibrations for the studied hydro-system and to explore linear and non-linear statistical methods for UV-visible data analysis and its relationship with chemical and physical parameters. In this work we apply classical linear multivariate data analysis and non-linear kernel methods in order to mine high-dimensional UV-vis data. These methods turn out to be useful for detecting relationships between UV-vis data and classical parameters and for detecting outliers, as well as for revealing non-linear data structures.

Keywords: UV-visible spectrometer, water quality, multivariate data analysis, non-linear data analysis.

Calibration methods based on Partial Least Squares (PLS) regression have been used. One of the most recent continuous water quality monitoring techniques, which reduces the difficulties of traditional sampling and laboratory water quality analysis [20], is UV-Visible in situ spectrometry. UV-Visible spectrometers register the absorbance of emitted light by particles suspended in water.

These sensors deliver more or less continuous measurements. Using them requires constructing functional relationships between absorbance and classical measurements of pollutant concentrations, such as TSS and COD, in the studied water system, taking different wavelengths into account. The composition of water from urban drainage depends on specific properties of the urban zone drained (industrial, residential, etc.).

Moreover, the monitoring of residential waste water exhibits the simultaneous presence of several dissolved and suspended particles, which leads to an overlapping of absorbances that can induce cross-sensitivities and consequently incorrect results.

This situation implies that the construction of a functional relationship between absorbance and classical pollutant measurements is especially challenging and may require more sophisticated statistical tools. In order to find appropriate relationships, several linear statistical tools have been applied so far.

Chemometric models such as Partial Least Squares (PLS) [5] are used to calibrate a sensor for a water matrix, taking cross-sensitivity into account [9]. Nevertheless, direct chemometric models can only be used if all components are known and if the Lambert-Beer law is valid, which is not the case when a great number of unknown compounds are involved [9].

Therefore, several authors (see for example [6], [9], [19]) have shown that it is necessary to undertake specific sensor calibrations for the studied water system and to explore linear and non-linear statistical methods for UV-Visible data analysis and its relationship with chemical and physical parameters. Some aspects that still need to be addressed are: selection of informative wavelengths, outlier detection, and calibration and validation of functional relationships.

These aspects are especially relevant for urban drainage, which has particular characteristics [5]. In this work we explore the use of descriptive multivariate linear methods and non-linear kernel data mining methods in order to detect data structure and address the above-mentioned aspects for in situ UV-Vis data analysis. Results are obtained through the analysis of a real-world data set from the influent of the San Fernando Waste Water Treatment Plant, Medellín, Colombia.

Nevertheless, the presence of a slight non-linearity can be revealed using non-linear kernel methods.

UV-Visible spectrometry

The spectrometer spectro::lyser, sold by the firm s::can, is a submersible cylindrical sensor (60 cm long, 44 mm diameter) that allows absorbances between [...] nm and [...] nm to be measured at intervals of 2.[...].

It includes a programmable self-cleaning system using air or water. This instrument has been used for real-time monitoring of different water bodies [8], [5], [3]. Measurements are made in situ without the need to extract samples, which avoids errors due to standard laboratory procedures [9].

UV-Vis data used for the analysis

The Medellín river's source is in the Colombian department of Caldas and along its [...] km length [...]. Before the implementation of the cleaning plan of the Medellín river, titled "Programa de Saneamiento del Río Medellín y sus Quebradas Afluentes" [2], these small rivers carried residential, industrial and commercial pollution to the Medellín river without any treatment.

The facility was built for a maximum flow rate of 1.[...]. Preliminary, primary and secondary treatment through activated sludge is undertaken at the WWTP facility; the sludge is thickened and stabilized in anaerobic digesters and then dehydrated and sent to a landfill [1]. During [...] the facility treated [...]. These samples were obtained in order to get a local calibration of the spectro::lyser sensor at the inlet of the SF-WWTP.

Principal Component Analysis

Principal Component Analysis consists of obtaining axes (PCs) that are linear combinations of all variables and that summarize as much information as possible in the first PCs.

The amount of information in each PC is measured as the percentage of variance retained. These axes are constructed by solving an eigenvalue problem, so each new PC is orthogonal to the other PCs generated, ensuring that information is not redundant [10]. This is important in the context of UV-Vis measurements, as close wavelengths are expected to carry redundant information.
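The eigenvalue formulation of PCA described above can be sketched in a few lines of Python. This is a minimal illustration, assuming NumPy is available; the function name `pca_eig` and the synthetic five-column data set are hypothetical stand-ins and are not the UV-Vis data or the software used in the study.

```python
import numpy as np

def pca_eig(X):
    """PCA via eigendecomposition of the covariance matrix.

    X has shape (n_samples, n_variables). Returns eigenvalues,
    eigenvectors (one PC per column), sample scores and the share of
    variance retained by each PC, sorted by decreasing variance.
    """
    Xc = X - X.mean(axis=0)                 # center each variable
    cov = np.cov(Xc, rowvar=False)          # variables x variables covariance
    eigvals, eigvecs = np.linalg.eigh(cov)  # symmetric eigenproblem (ascending)
    order = np.argsort(eigvals)[::-1]       # reorder to decreasing variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = Xc @ eigvecs                   # coordinates of samples on the PCs
    ratio = eigvals / eigvals.sum()         # fraction of variance per PC
    return eigvals, eigvecs, scores, ratio

# Synthetic stand-in for redundant spectra: five "wavelengths" driven by
# a single latent factor plus a little noise (illustrative only).
rng = np.random.default_rng(0)
latent = rng.normal(size=(100, 1))
loadings = np.array([[1.0, 0.9, 0.8, 0.7, 0.6]])
X = latent @ loadings + 0.05 * rng.normal(size=(100, 5))

eigvals, eigvecs, scores, ratio = pca_eig(X)
```

Because the five synthetic columns are almost copies of one another, nearly all of the variance falls on the first PC, which is the kind of redundancy the text expects from neighbouring wavelengths.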

So, highly similar wavelengths can be filtered and information can be reduced to a few PCs. Moreover, correlation with response variables other than UV-Vis measurements can be investigated.

Kernel Methods

Suppose that a group of n objects needs to be analyzed.

These objects can be of any nature, for example images, texts, water samples, etc. The first step before an analysis is conducted is to represent the objects in a way that is useful for the analysis. Most analyses apply a transformation of the objects that needs to be known explicitly. Kernel methods project objects into a high-dimensional space, thereby allowing the determination of non-linear relationships through a mapping $\phi$ that does not need to be explicitly known.

This mapping is achieved through a kernel function and results in a similarity matrix comparing all objects in pairs. The resulting matrix has to be positive semidefinite. The flexibility of these methods is due to the representation of data as pairwise comparisons, which does not depend on the nature of the data.

Moreover, as the same objects are compared independently of the data type, several kernels can be combined by addition and heterogeneous data types can be integrated into the analysis. The simplest kernels are linear kernels, obtained from the inner product.
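As a small illustration of the pairwise representation described above, the following sketch builds linear kernels (Gram matrices of inner products) for two hypothetical data blocks measured on the same six objects, adds them, and checks that the result is still positive semidefinite. The data, the block names and the helper `is_psd` are assumptions made for this example only.

```python
import numpy as np

def linear_kernel(X):
    """Gram matrix of inner products between all pairs of rows of X."""
    return X @ X.T

def is_psd(K, tol=1e-8):
    """True if the symmetric matrix K has no eigenvalue below -tol."""
    return bool(np.all(np.linalg.eigvalsh((K + K.T) / 2) >= -tol))

rng = np.random.default_rng(1)
spectra = rng.normal(size=(6, 4))   # hypothetical block 1: 6 objects, 4 variables
other = rng.normal(size=(6, 2))     # hypothetical block 2: the same 6 objects

K_spectra = linear_kernel(spectra)  # 6 x 6 similarity matrix
K_other = linear_kernel(other)      # 6 x 6 similarity matrix
K_sum = K_spectra + K_other         # kernels combined by addition
```

Both blocks end up as matrices of the same size (objects by objects) regardless of how many variables each block has, which is why heterogeneous data can be combined this way.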

Other kernels, such as Gaussian, polynomial and diffusion kernels, have been used for genomic data (Vert et al.). The most common kernel, which is also used here, is the Gaussian kernel, which depends on d, the Euclidean distance between objects, and on sigma, a parameter chosen via cross-validation.

The search for principal components in the high-dimensional space H is based on the mapping.

Objects $x_1, \dots, x_n$ are assumed to be centered in the original space and in the high-dimensional feature space. The new components are found by solving the following problem and finding $v$ and $\lambda$ so that the equality

$$\lambda v = T v$$

holds, $T$ being the variance-covariance matrix of the individuals in space H:

$$T = \frac{1}{n}\sum_{i=1}^{n} \phi(x_i)\,\phi(x_i)^{\top}.$$

Writing $v$ as

$$v = \sum_{i=1}^{n} \alpha_i\,\phi(x_i),$$

the system can be rewritten as

$$n\lambda\,\alpha = K\alpha,$$

where $K$ is the kernel matrix with entries $K_{ij} = \langle \phi(x_i), \phi(x_j)\rangle$. Due to the mapping $\phi$, the solution of this eigenvalue problem provides non-linear principal components in the high-dimensional space without knowing $\phi$ explicitly.
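A minimal sketch of kernel PCA with a Gaussian kernel follows, assuming NumPy. It uses the parametrization exp(-d²/(2·sigma²)), which is one common convention and an assumption here; other software scales sigma differently (kernlab's `rbfdot`, for instance, uses exp(-sigma·d²)). The function names and the two-ring toy data are hypothetical and only illustrate the eigenvalue problem on the centered kernel matrix.

```python
import numpy as np

def gaussian_kernel(X, sigma):
    """Gaussian kernel matrix, assuming k = exp(-d^2 / (2 * sigma^2))."""
    sq = np.sum(X**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T  # squared Euclidean distances
    d2 = np.maximum(d2, 0.0)                        # guard against round-off
    return np.exp(-d2 / (2.0 * sigma**2))

def kernel_pca(K, n_components):
    """Kernel PCA from a precomputed kernel matrix K (n x n)."""
    n = K.shape[0]
    one = np.full((n, n), 1.0 / n)
    # Center the mapped objects in feature space without computing the mapping.
    Kc = K - one @ K - K @ one + one @ K @ one
    eigvals, alphas = np.linalg.eigh(Kc)            # eigenvalues of Kc (ascending)
    order = np.argsort(eigvals)[::-1][:n_components]
    eigvals, alphas = eigvals[order], alphas[:, order]
    # Projection of each training object on a unit-norm component:
    # Kc @ (alpha / sqrt(eigval)) = sqrt(eigval) * alpha.
    scores = alphas * np.sqrt(np.maximum(eigvals, 0.0))
    return eigvals, scores

# Toy data: two concentric rings, a structure no linear projection separates.
rng = np.random.default_rng(0)
angles = rng.uniform(0.0, 2.0 * np.pi, size=60)
radii = np.repeat([1.0, 3.0], 30)
X = np.column_stack([radii * np.cos(angles), radii * np.sin(angles)])

K = gaussian_kernel(X, sigma=1.0)
eigvals, scores = kernel_pca(K, n_components=2)
```

Only the kernel matrix enters the computation, so the same `kernel_pca` function would work with any other positive semidefinite kernel.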

The amount of non-linearity is mainly tuned through the kernel hyperparameters, here the parameter sigma of the Gaussian kernel. Different behaviors should reveal samples that show contradictory information between UV-Vis and response variables. The K-means algorithm on a non-linear projection works the same way as on a linear one. It starts by creating k random clusters (here 3) and reorganizing individuals until the within-cluster distances to the centroid (the mean of the cluster) are as low as possible.

At each step individuals are rearranged and centroids recalculated to determine the distance of each individual in the cluster to the centroid [11].

Support Vector Machine Regression

Support Vector Machine regression and classification are very useful for detecting patterns in complex and non-linear data.

The main problem solved by SVM is the adjustment of a function that describes a relationship between objects X and the response Y (which is binary when classification is done), using the data set S. If the objects are vectors of dimension P, the relationship is described by a function $f$ defined on $\mathbb{R}^P$. Primarily, SVM was used for classification into two categories, where Y is a vector of labels, but an extension to SVM regression exists [18]. SVM classification and regression allow a compromise between parametric and non-parametric approaches.

They allow the adjustment of a linear classifier in a high-dimensional feature space to the mapped sample. In this case, the classifier is a hyperplane. The margin around the hyperplane, which is equidistant to the nearest point of each class, can be written as $2/\lVert w\rVert$. In order to maximize it, the following optimization problem has to be solved: $\min \lVert w\rVert^2$ subject to $y_i(\langle w, \phi(x_i)\rangle + b) \ge 1$ for every sample $i$.

In order to solve this optimization problem, which has no local minima and leads to a unique solution, a loss function is used. This function penalizes large values of f(x) with the opposite sign of y. Points that lie on the boundaries of the classifier, and therefore satisfy the equality, are called the support vectors.

In SVM regression the loss function differs and a new parameter ε appears. In classification, when a pattern is far from the margin it does not contribute to the loss function. The basic SV regression seeks to estimate the linear function $f(x) = \langle w, x\rangle + b$, where $w$ and $x$ are elements of H, the high-dimensional space, and $b$ is a scalar offset. A function minimizing error is adjusted, controlling training error and model complexity. A small w indicates a flat function in the H space.
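The difference between the two loss functions can be made concrete with a short sketch. It assumes the standard hinge loss for classification and the standard ε-insensitive loss for regression; the text above does not name either, so both choices and the function names are assumptions made for illustration.

```python
def hinge_loss(y, fx):
    """Classification loss for a label y in {-1, +1} and a prediction f(x).

    Zero when the pattern is on the correct side and outside the margin
    (y * f(x) >= 1); grows linearly when f(x) has the wrong sign or lies
    inside the margin.
    """
    return max(0.0, 1.0 - y * fx)

def eps_insensitive_loss(y, fx, eps):
    """Regression loss: residuals smaller than eps cost nothing."""
    return max(0.0, abs(y - fx) - eps)

# A pattern far on the correct side of the margin does not contribute.
far_correct = hinge_loss(+1, 2.5)
# A prediction with the wrong sign is penalized.
wrong_sign = hinge_loss(-1, 0.5)
# A small residual falls inside the eps-tube and is ignored.
inside_tube = eps_insensitive_loss(3.0, 3.05, eps=0.1)
# A larger residual is penalized only for the part exceeding eps.
outside_tube = eps_insensitive_loss(3.0, 3.5, eps=0.1)
```

In both cases the observations with zero loss do not influence the fitted function, which is why the solution depends only on the support vectors.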

In nu-SVM regression, ε is calculated automatically, making a trade-off between model complexity and slack variables. For the optimization to work, nu should be lower than 1. Here we used SVM regression to adjust a model for the prediction of response values. Therefore, we divided the samples randomly into approx. [...]

For the SVM regression the R [15] kernlab [7] package was used. To adjust the sigma parameter based on differential evolution [14] we used the DEoptim package [13]. This procedure was done only for the calibration data, using as the objective function the quadratic differences between observed and SVM-regression estimated data for the water quality parameters TSS, COD and fCOD independently.

Results and Discussion

Independent intensity values of the data set show a decrease in intensity when wavelength increases.

Variability is similar for all wavelengths but is slightly higher for lower wavelengths. A few extreme values are present at the univariate level, but no evidence exists that they could be due to sampling errors and therefore no data filtering was undertaken. Extreme high values are also found at the univariate level, but were not filtered either, for the same reasons as before.

PCA conducted on all UV-Vis data showed that these data are extremely correlated and therefore redundant, as they can be summarized in the first PC with [...]. The first two PCs summarize [...]. The most important variables on the first PC, contributing the highest variance, are the following wavelengths (in nm): [...]. This result indicates that with these wavelengths alone, enough information on samples could be obtained, because approx. [...]. Most samples are very similar, showing projections with very similar coordinates on the PC space.

The use of kernel k-means allowed the detection of non-linear outliers, which would have remained undetected using linear analysis methods (PCA, linear clustering, multivariate outlier detection, etc.).

This indicates a problem with the data set received and confirms the detection of these samples as outliers. This approach opens a new possibility for the use of kernel methods in the advanced identification of outliers for future continuous monitoring of water quality (detection of measurement or sampling errors, or alerts in treatment facilities, valve operation, etc.).

This low value indicates that even though variables are highly linearly correlated, as was shown through PCA, some non-linear structure in the data persists and is extracted through kernel projection on a Hilbert space (Figure 3).
