su frase es magnГfica

what does casual relationship mean urban dictionary

Sobre nosotros

Category: Citas para reuniones

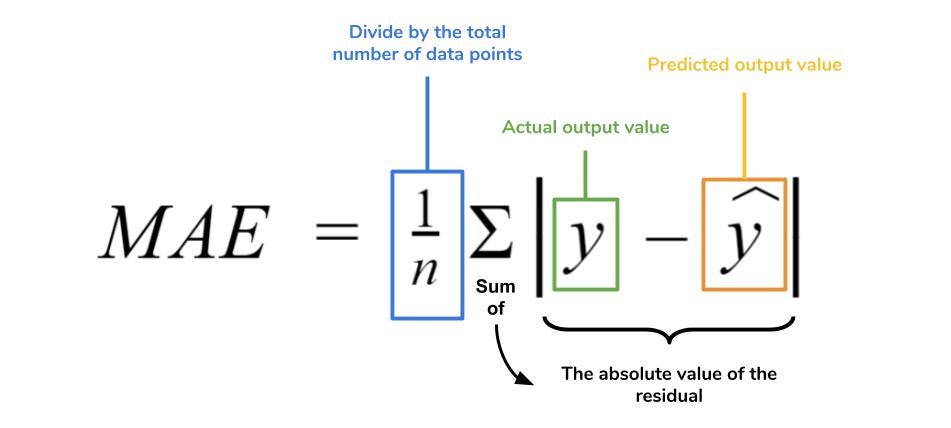

Difference between mean squared error and mean absolute error


Comparison of statistical indices for the evaluation of crop models performance

This study presents a comparison of the usual statistical methods used for crop model assessment. A case study was conducted using a data set of observations of total dry weight in a diploid potato crop and six simulated data sets, derived from the observations, that aimed to predict the measured data. Statistical indices such as the coefficient of determination, the root mean squared error, the relative root mean squared error, the mean error, the index of agreement, the modified index of agreement, the revised index of agreement, the modeling efficiency, and the revised modeling efficiency were compared.

The results showed that the coefficient of determination is not a useful statistical index for model evaluation. The root mean squared error, together with the relative root mean squared error, gives an excellent notion of how far the simulations deviate, in the same unit as the variable and in percentage terms, and they leave no doubt when evaluating the quality of the simulations of a model against the observations.

Received: June 14; Accepted: August 6.

Traditional research based on field experiments requires a high investment in infrastructure, equipment, labor, and time.

An alternative to conventional studies is the development and application of crop models in agriculture. These models give a simplified representation of the processes that occur in a real system, including variables that interact and evolve, and show dynamic and realistic behavior over time (Thornley). Crop models allow experimentation that complements traditional research based on field experiments, and they permit an economical and practical evaluation of the effect of different environmental conditions and agricultural management alternatives, reducing risk, time, and costs (Ewert). Several simulation models have been developed for crops such as cassava (Moreno-Cadena et al.).

Moreover, models are continuously evaluated under different environmental conditions, cultivars, and treatments. These crop models are useful tools for simulating real crop growth and development processes (Yang et al.). The models in use are assumptions that have best survived the unremitting criticism and skepticism that are an integral part of the scientific process of construction and development (Thornley). In general, the data sets used to develop a model differ from the real inputs with which the model is expected to be used.

For a crop simulation model to represent a real process, it must be evaluated considering the differences between crop systems, soils, climate, and management practices; otherwise, the conclusions may be speculative and incorrect (Yang et al.). The growth dynamics represented in crop models are based on a set of hypotheses, which can result in simulation biases or errors (Yang et al.).

Thus, evaluating model performance by comparing model estimates with actual values is crucial. This process includes defining criteria that rely on mathematical measurements of how well the estimates produced by the model simulate the observed values (Ramos et al.). This statistical analysis is considered the critical method for comparing the model outputs with the measured data (Montoya et al.). The most common methods for assessing the reliability of simulation models are based on the analysis of differences between measured and simulated values and on regression analysis, also between measured and simulated values (Lin et al.).

However, many authors who research crop modeling use such methods without detailing their methodological basis, and with terminology and symbols that create confusion. For example, in the analysis of differences, statistics such as the relative error (RE), the index of agreement (d), and the modeling efficiency (EF) may be useful when comparing the simulation capability of one model with another, but not when comparing what is observed with what is simulated in the same model (Ramos et al.).

The relative error (RE), which relates the error between measured and simulated values to the measured average, represents the relative size of the average difference (Willmott) and indicates whether the magnitude of the root mean square error (RMSE) is low, medium, or high. However, it has the disadvantage that it can be affected by the magnitude of the values, by outliers, and by the number of observations. Two groups of data, one with high values and one with low values, may present a similar RMSE.

However, because their averages differ, their RE values will also differ (Cao et al.). Because of its simplicity, regression analysis is often misused to evaluate simulation models. In some cases, the RMSE that measures the average difference between measured and simulated values tends to be used indiscriminately, without considering that it is different from the RMSE obtained in regression analysis (Willmott). The coefficient of determination (R²) is a measure of the fit of the linear regression which, when used in isolation, makes no sense, since the goal is to evaluate the crop simulation model, not the regression model obtained.
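The point that two data groups can share a similar RMSE and still differ in RE can be illustrated with a small sketch. It assumes RE is the RMSE expressed as a percentage of the observed mean, which is only one reading of the description above; the function names and all numbers are hypothetical.

```python
import math


def rmse(observed, simulated):
    """Root mean square error between paired observations and simulations."""
    squared = [(s - o) ** 2 for o, s in zip(observed, simulated)]
    return math.sqrt(sum(squared) / len(squared))


def relative_error(observed, simulated):
    """Assumed form of RE: the RMSE as a percentage of the observed mean."""
    observed_mean = sum(observed) / len(observed)
    return 100.0 * rmse(observed, simulated) / observed_mean


# Hypothetical data: both groups are simulated with the same absolute deviation.
low_obs = [100.0, 200.0, 300.0]
high_obs = [1000.0, 2000.0, 3000.0]
low_sim = [v + 50.0 for v in low_obs]
high_sim = [v + 50.0 for v in high_obs]

# The RMSE is identical for both groups, but the relative error is not.
print(rmse(low_obs, low_sim), rmse(high_obs, high_sim))
print(relative_error(low_obs, low_sim), relative_error(high_obs, high_sim))
```

Under these assumptions the group with low values shows a relative error ten times larger than the group with high values, although the RMSE is the same.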

The magnitude of R² does not necessarily reflect whether the simulated data represent the observed data well, since it is not consistently related to the accuracy of the prediction (Willmott). This is because an R² close to 1 can be obtained. Many statistical indices are frequently used in model evaluation, and this study aimed to compare them and to improve the understanding and interpretation of these conventional statistical indices in a case study.

Nine statistical indices were computed to evaluate simulations of the total dry weight (kg ha-1) observed in a diploid potato field experiment conducted in Medellín, Colombia. This data set was taken from Saldaña-Villota and Cotes-Torres. In addition, six data sets were generated from the actual observed data, with arbitrary deviations imposed to illustrate the behavior of the statistical indices under evaluation.

The six cases are listed in Table 1. In case 1, the first half of the simulations is overestimated, and the second half is underestimated by the same amount (kg ha-1). In case 2, the first half of the simulations is overestimated 1. In case 3, all simulations are overestimated in kg ha-1. In case 4, all simulations are overestimated 2.

In case 5, most of the simulations are overestimated in different proportions, and an outlier 3. Finally, in case 6, all simulations are overestimated in different proportions, and they do not have any relationship with the observations.

Table 1: Actual observations of diploid potato total dry weight (kg ha-1) and simulated data sets.

The indices are expected to inform the researcher of the accuracy of any model in simulating the observations. The statistical indices are expected to allow decisions to be made regarding the acceptance or rejection of the models.

In this study, given the modifications applied to generate the six cases, the statistical indices must accept cases 1 and 3 and reject the other cases without ambiguity. Many statistical indices are commonly used in model evaluation, and they have been classified according to their mathematical formulation. In this study, nine indices were evaluated and classified into two categories.

The first category corresponds to the 'test statistics', and the second corresponds to measures of accuracy and precision called 'deviation statistics' (Ali and Abustan; Willmott et al.). Linear regression and the coefficient of determination (R²) are used to explain how well the simulations (y) represent the observations (x) (Kobayashi and Salam; Moriasi et al.). The linear model follows Equation 1. The R² assesses the goodness of fit of the linear model by measuring the proportion of variation in y that is accounted for by the linear model.

However, many researchers have reported the limitations of R² for the appropriate evaluation of models, remarking that R² estimates the linear relationship between two variables and is not sensitive to additive and proportional differences between model estimates and measured data (Kobayashi and Salam; McCuen and Snyder; Willmott). The authors also indicate that the relationship may be non-linear, which would be an additional problem.
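The insensitivity of R² to additive and proportional differences can be checked numerically. The sketch below computes R² for the simple linear regression of y on x as the squared correlation; the function name and the data are hypothetical and are not the values of the case study.

```python
def r_squared(observed, simulated):
    """R² of the simple linear regression of simulated (y) on observed (x)."""
    n = len(observed)
    mean_x = sum(observed) / n
    mean_y = sum(simulated) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(observed, simulated))
    sxx = sum((x - mean_x) ** 2 for x in observed)
    syy = sum((y - mean_y) ** 2 for y in simulated)
    return sxy ** 2 / (sxx * syy)


# Hypothetical observations of total dry weight (kg ha-1).
observed = [100.0, 400.0, 900.0, 1500.0, 2100.0]

# Additive difference: every simulation is 500 kg ha-1 too high.
shifted = [x + 500.0 for x in observed]
# Proportional difference: every simulation is twice the observation.
doubled = [2.0 * x for x in observed]

print(r_squared(observed, shifted))
print(r_squared(observed, doubled))
```

Both simulated series are clearly wrong, yet R² equals 1 (up to floating-point rounding) in both cases, because each series is an exact linear function of the observations.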

The mean error (E, Equation 2) indicates whether the model simulations (y) overestimate or underestimate the observations (x). E has a drawback: positive and negative errors can cancel each other, so large positive and negative deviations can still yield E = 0 (Addiscott and Whitmore; Yang et al.). Because of this disadvantage, some measures based on the sum of squares were proposed (Yang et al.).
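The cancellation of positive and negative errors can be seen in a short sketch. It assumes E is the average of the differences y - x, and it adds the mean absolute error only for contrast; the data are hypothetical and merely mimic the pattern of case 1.

```python
def mean_error(observed, simulated):
    """Assumed form of E: the average of the signed differences y - x."""
    return sum(y - x for x, y in zip(observed, simulated)) / len(observed)


def mean_absolute_error(observed, simulated):
    """Average of the absolute differences |y - x|, shown for contrast."""
    return sum(abs(y - x) for x, y in zip(observed, simulated)) / len(observed)


# Hypothetical observations; the first half is overestimated and the
# second half underestimated by the same amount.
observed = [100.0, 400.0, 900.0, 1500.0, 2100.0, 2600.0]
offset = 300.0
simulated = [x + offset for x in observed[:3]] + [x - offset for x in observed[3:]]

print(mean_error(observed, simulated))           # signed errors cancel out
print(mean_absolute_error(observed, simulated))  # the deviation is still there
```

The mean error is zero even though every simulated value is off by 300 kg ha-1, which is why E alone cannot be used to accept a model.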

The root mean square error (RMSE, Equation 3) has the same unit as the deviation (y - x), and it is frequently used in both model calibration and validation (Hoogenboom et al.). The relative root mean square error (rRMSE, Equation 4) is a relative measure used for comparisons of different variables or models. A perfect fit between simulations and observations produces an EF of 1. Any value between 0 and 1. Another index commonly used in crop model evaluation is the index of agreement (d, Equation 6), a dimensionless measure ranging from 0 to 1.
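The four indices named above can be sketched as follows. The equations themselves are not reproduced in this text, so the code uses the commonly cited definitions (RMSE, RMSE as a percentage of the observed mean, the Nash-Sutcliffe form of EF, and Willmott's d); treat these as assumptions about Equations 3 to 6. Function names and data are hypothetical.

```python
import math


def rmse(observed, simulated):
    """Root mean square error, in the unit of the variable."""
    return math.sqrt(
        sum((y - x) ** 2 for x, y in zip(observed, simulated)) / len(observed)
    )


def rrmse(observed, simulated):
    """RMSE as a percentage of the mean of the observations."""
    return 100.0 * rmse(observed, simulated) / (sum(observed) / len(observed))


def modeling_efficiency(observed, simulated):
    """EF: 1 minus squared errors over squared deviations from the observed mean."""
    mean_x = sum(observed) / len(observed)
    errors = sum((y - x) ** 2 for x, y in zip(observed, simulated))
    spread = sum((x - mean_x) ** 2 for x in observed)
    return 1.0 - errors / spread


def index_of_agreement(observed, simulated):
    """d: 1 minus squared errors over the squared potential error."""
    mean_x = sum(observed) / len(observed)
    errors = sum((y - x) ** 2 for x, y in zip(observed, simulated))
    potential = sum(
        (abs(y - mean_x) + abs(x - mean_x)) ** 2
        for x, y in zip(observed, simulated)
    )
    return 1.0 - errors / potential


# Hypothetical data: every simulation is 100 kg ha-1 too high.
observed = [100.0, 400.0, 900.0, 1500.0, 2100.0]
simulated = [x + 100.0 for x in observed]

for index in (rmse, rrmse, modeling_efficiency, index_of_agreement):
    print(index.__name__, index(observed, simulated))
```

With a perfect fit (simulated equal to observed), these definitions give RMSE = 0 and EF = d = 1, which is a quick sanity check on any implementation.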

This index has been recommended by researchers in modeling for comparisons between simulated values and measured data (Krause et al.). EF and d are more sensitive to larger deviations than to smaller ones. The main disadvantage of both statistics is that the differences between model estimates and observations are calculated as squared values; thus, statistics based on sums of squares are very sensitive to outliers or larger deviations because the deviation term is squared (Krause et al.).

To overcome the difficulty that statistics based on the sum of squares are inflated by the squared deviation term, statistics based on the sum of absolute values were proposed (Krause et al.). The modified efficiency coefficient (EF1, Equation 7) replaces the sum of squares term with the sum of absolute values of y - x.
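The different reaction of EF and EF1 to a single outlier can be sketched as below. The code assumes EF1 mirrors EF with absolute values in place of squares in both the numerator and the denominator, which is the usual form but is not confirmed by the equations in this text. Names and data are hypothetical.

```python
def modeling_efficiency(observed, simulated):
    """EF, based on sums of squared deviations."""
    mean_x = sum(observed) / len(observed)
    errors = sum((y - x) ** 2 for x, y in zip(observed, simulated))
    spread = sum((x - mean_x) ** 2 for x in observed)
    return 1.0 - errors / spread


def modified_efficiency(observed, simulated):
    """Assumed EF1: the same ratio built from sums of absolute deviations."""
    mean_x = sum(observed) / len(observed)
    errors = sum(abs(y - x) for x, y in zip(observed, simulated))
    spread = sum(abs(x - mean_x) for x in observed)
    return 1.0 - errors / spread


# Hypothetical data: four small errors of 50 and one outlier of 3000.
observed = [100.0, 400.0, 900.0, 1500.0, 2100.0]
simulated = [150.0, 450.0, 950.0, 1550.0, 5100.0]

print("EF :", modeling_efficiency(observed, simulated))
print("EF1:", modified_efficiency(observed, simulated))
```

In this example the outlier pushes EF well below zero, while EF1 stays at zero, because squaring gives the single large deviation far more weight than taking its absolute value.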

Willmott et al. The author remarks that d1 yields 1. The statistical indices used to evaluate the six simulated data sets were calculated, and the figures were made, with the R statistical software (R Core Team). This study shows a comparison of nine statistical indices used during model evaluation. The actual total dry weight measured in a diploid potato field experiment and the six simulated data sets are shown in Figure 1 to facilitate the visualization of the data and their analysis.

Figure 1: Comparison between real observations and six simulated data sets of total dry weight in diploid potato crop (kg ha-1) over time (days after planting). Black circles correspond to the real observations, and red ones correspond to their simulated counterparts.

In simulated data cases 1, 2, 3, and 4 (Figure 1A-D), different scenarios were presented in which the actual observations are overestimated or underestimated.

The simulations preserved the trend of the measurements, which is why the R² was high. Although the simulations considerably overestimated the measurements in case 4, the R² will be close to 1 whenever the simulated data follow the trend of the observations, even if they are overestimated or underestimated.

Consequently, this index is not adequate to evaluate the quality of the simulations of growth variables in crop models. The coefficient of determination was lower in cases 5 and 6 (Figures 1E and F), indicating that the simulated data did not follow the trend of the observed data. E indicates whether the model overestimates or underestimates the measurements. This index had difficulty indicating what happened in case 1, in which half of the simulations were overestimated and half were underestimated in the same proportion.

In this case, E = 0, and this value gives no indication of over- or underestimation. In case 2, E indicates that the simulated data underestimate the total dry weight by kg ha-1. According to E, case 6 registered the maximum overestimation, exceeding kg ha-1. The RMSE indicates how far the simulated mean deviates from the observed mean.

This index does not indicate whether there are overestimates or underestimates. Nevertheless, if the RMSE is close to zero, or below the threshold set by the researcher according to expertise in the crop studied, the model performs better in predicting the measured data. If the researcher is not an expert on the range of values that a growth variable can reach, the RMSE should be evaluated together with the rRMSE, which indicates the deviation of the simulations from the general mean of the observations in percentage terms.

In this sense, according to the characteristics of these two indices, cases 1 and 3 unquestionably performed best when simulating the observations, where the deviation from the mean was and kg ha-1, corresponding to 9. Regarding case 2, where the simulations underestimated the total dry weight from 65 DAP, the RMSE was affected, recording a value of In case 4, as mentioned, the simulations overestimated the observations even though they followed their trend.

This overestimation significantly influenced the RMSE, which registered a value of Case 5 exemplifies the effect that outliers have on statistical indices. At 91 DAP, a very high value was recorded in the simulations compared to the other simulations and, of course, to the observations. Together with the other predicted data, this outlier generated RMSE Although the graphical representation (Figure 1F) is a clear indication of the low quality of the predictions, an RMSE


In addition, this data set had outliers, but in general the simulated data had no relationship with the observations.

Both the modeling efficiency statistics (EF and EF1) and the indices of agreement (d, d1, and d1') are widely used in model evaluation. EF reached higher values, both positive and negative, because its formulation is based on sums of squares, which makes it more affected by outliers. EF1 is calculated from sums of absolute values, which means less sensitivity to extreme data. In the same way as EF and EF1, the statistics of group d must be estimated in association with other indices to make better inferences about the accuracy of the simulation. The researcher should use these dimensionless indices carefully.

R² is unquestionably not a suitable parameter for model evaluation because it is not sensitive to additive (regression intercept) and proportional (regression slope) differences (Willmott et al.). The mean error E is a good statistical parameter to quickly determine whether the model under- or overestimates the observations. It is important to highlight what the RMSE and the rRMSE offer: together they give a better idea of how far the simulations deviate, in the same unit as the variable and in percentage terms.

Published in Revista Facultad Nacional de Agronomía Medellín.

Error measures in demand forecasting

Let us discuss the six error measures used in demand forecasting. We have previously mentioned the importance of being more accurate and reducing the error in the forecasts made across the supply chain.

Percentage errors have the advantage of being scale-independent, so they are frequently used to compare forecast performance across different data sets. The mean absolute percentage error is another error measure that makes it possible to assess the performance of a demand forecast. These measures start from the difference between the forecast value and the actual value at each forecast point. For absolute-error measures, the absolute value of each difference is taken, and the sum is divided by the number of forecast points. For the RMSE, the square root of the previous result is taken. A scaled version of the RMSE allows low-demand and high-demand items to be compared fairly, which avoids bias toward higher-volume items.

The AIC is based on information entropy. Given a set of candidate models for the data, the preferred model is the one with the minimum AIC value.

Efforts should be directed at understanding the internal mechanism of the error measures, in order to be aware of the inevitable blind spots when tracking the forecasts of the different items under study.
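The difference between the mean absolute error and the mean squared error in a forecasting setting can be sketched as follows. The function name `forecast_errors`, the dictionary keys, and the demand figures are hypothetical; the formulas are the standard definitions of MAE, MSE, RMSE, and MAPE, and MAPE as written assumes no actual value is zero.

```python
import math


def forecast_errors(actual, forecast):
    """Return MAE, MSE, RMSE, and MAPE for paired actual and forecast values."""
    diffs = [f - a for a, f in zip(actual, forecast)]
    n = len(diffs)
    mae = sum(abs(d) for d in diffs) / n
    mse = sum(d * d for d in diffs) / n
    rmse = math.sqrt(mse)
    # MAPE divides each absolute error by the actual value (must be non-zero).
    mape = 100.0 * sum(abs(d) / abs(a) for d, a in zip(diffs, actual)) / n
    return {"MAE": mae, "MSE": mse, "RMSE": rmse, "MAPE": mape}


# Hypothetical demand for one item over four periods.
actual = [100.0, 120.0, 80.0, 100.0]
steady = [110.0, 110.0, 90.0, 110.0]  # every forecast misses by 10 units
spiky = [100.0, 120.0, 80.0, 140.0]   # a single forecast misses by 40 units

print(forecast_errors(actual, steady))
print(forecast_errors(actual, spiky))
```

Both forecasts have the same MAE of 10 units, but the MSE of the second is four times larger, because squaring penalizes one large miss more than several small ones. This is the same sensitivity to large deviations that distinguishes the sums-of-squares indices from the absolute-value indices.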
